Welcome Ruohan Li, who has joined our group as a research assistant!

We are pleased to welcome Ruohan Li, who recently joined our group as a research assistant. Ruohan holds a Bachelor’s degree in Economics and Finance from the University of Toronto and a Master’s degree in Business Analytics from the Ivey Business School at Western University.

Research paper accepted by Transportation Research Part E

Understanding the causal relationships among traffic states across the system is essential for improving traffic management and optimization in urban traffic networks. However, few studies have systematically analyzed the causal structure that characterizes the evolution of traffic states over time, or assessed the importance of traffic nodes from a causal perspective, particularly in large-scale traffic networks. Moreover, the dynamic nature of traffic patterns calls for a robust method that can reliably discover causal relationships, a requirement often overlooked in existing studies. To address these issues, we propose a Spatio-Temporal Causal Structure Learning and Analysis (STCSLA) framework for analyzing large-scale urban traffic networks at a mesoscopic level through a causal lens. The framework comprises three main components: (1) decomposition of spatio-temporal traffic data into localized traffic subprocesses; (2) Bayesian Information Criterion (BIC)-guided spatio-temporal causal structure learning, combined with temporal-dependency-preserving sampling, to derive reliable causal graphs that uncover both time-lagged and contemporaneous causal effects; and (3) a set of causality-oriented indicators for identifying causally critical nodes, mediator nodes, and bottleneck nodes in traffic networks. Experimental results on a synthetic dataset and the real-world Hong Kong traffic dataset demonstrate that STCSLA accurately uncovers time-varying causal relationships and identifies key nodes that play different causal roles in influencing traffic dynamics. These findings underscore the framework’s potential to improve traffic management and provide a comprehensive, causality-driven approach to analyzing urban traffic networks.
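The abstract does not describe how the BIC guidance or the temporal-dependency-preserving sampling are implemented. As a rough illustration only, the sketch below scores candidate time-lagged parents of a single traffic node with a linear-Gaussian BIC, adds them by greedy forward selection, and repeats the search on moving-block bootstrap resamples (one standard way to resample while keeping runs of consecutive time steps intact) so that each candidate edge receives a selection frequency. The linear model, the greedy search, the moving-block bootstrap, and every function name here (`bic_linear`, `lagged_design`, `forward_select`, `block_bootstrap_indices`, `edge_frequencies`) are illustrative assumptions, not the STCSLA implementation; contemporaneous effects are left out.

```python
import numpy as np


def bic_linear(y, X):
    """BIC of an OLS fit of y on X plus an intercept, assuming Gaussian residuals."""
    n = len(y)
    design = np.column_stack([np.ones(n), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    rss = float(np.sum((y - design @ coef) ** 2))
    return n * np.log(rss / n) + design.shape[1] * np.log(n)


def lagged_design(data, target, max_lag=1):
    """Align y_t = data[t, target] with every node's values at lags 1..max_lag."""
    T, N = data.shape
    y = data[max_lag:, target]
    # Candidate parents are keyed as (source_node, lag).
    cands = {(i, lag): data[max_lag - lag:T - lag, i]
             for i in range(N) for lag in range(1, max_lag + 1)}
    return y, cands


def forward_select(y, cands):
    """Greedily add the lagged parent that lowers BIC most; stop when none does."""
    chosen = []
    best = bic_linear(y, np.empty((len(y), 0)))
    while len(chosen) < len(cands):
        scores = {c: bic_linear(y, np.column_stack([cands[p] for p in chosen + [c]]))
                  for c in cands if c not in chosen}
        c_best = min(scores, key=scores.get)
        if scores[c_best] >= best:
            break
        best = scores[c_best]
        chosen.append(c_best)
    return chosen


def block_bootstrap_indices(n, block_len, rng):
    """Moving-block bootstrap: stitch together randomly placed runs of consecutive rows."""
    n_blocks = -(-n // block_len)  # ceiling division
    starts = rng.integers(0, n - block_len + 1, size=n_blocks)
    return np.concatenate([np.arange(s, s + block_len) for s in starts])[:n]


def edge_frequencies(data, target, max_lag=1, n_boot=50, block_len=20, seed=0):
    """Fraction of bootstrap replicates in which each lagged edge into `target` is selected."""
    y, cands = lagged_design(data, target, max_lag)
    rng = np.random.default_rng(seed)
    counts = dict.fromkeys(cands, 0)
    for _ in range(n_boot):
        # Resample aligned (y, lagged X) rows in blocks, so each row keeps its lag alignment.
        idx = block_bootstrap_indices(len(y), block_len, rng)
        for c in forward_select(y[idx], {k: v[idx] for k, v in cands.items()}):
            counts[c] += 1
    return {c: k / n_boot for c, k in counts.items()}
```

Resampling the already-aligned lagged rows, rather than the raw series, avoids creating artificial lag relationships at block boundaries. Edges that are selected in most replicates can then be treated as the more reliable part of the learned graph.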

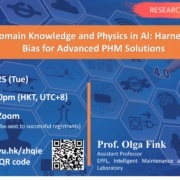

Prof. Olga Fink gave a talk on “Integrating Domain Knowledge and Physics in AI: Harnessing Inductive Bias for Advanced PHM Solutions”

In the field of prognostics and health management (PHM), the integration of machine learning has enabled the development of advanced predictive models that support the reliable and safe operation of complex assets. However, sparse, noisy, and incomplete data make it necessary to incorporate prior knowledge and inductive bias to improve model generalization, interpretability, and robustness.

Inductive bias, defined as the set of assumptions embedded in a machine learning model, plays a crucial role in guiding the model to generalize from limited training data to real-world scenarios. In PHM applications, where physical laws and domain-specific knowledge are fundamental, appropriate inductive bias can significantly improve a model’s ability to predict system behavior under diverse operating conditions. By embedding physical principles into learning algorithms, inductive bias reduces reliance on large datasets, encourages physically consistent predictions, and improves both the generalizability and interpretability of the models.
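To make the idea of embedding a physical principle as an inductive bias concrete, here is a minimal sketch, assuming a system governed by the first-order decay law `dy/dt = -k * y` with known `k`. A flexible polynomial is fitted to a handful of observations, while a penalty on the ODE residual at extra collocation points (a PINN-style soft constraint) supplies information where no data exist. The decay law, the polynomial basis, the weight `lam`, and all function names are illustrative assumptions; this is not a graph neural network and not a method presented in the talk.

```python
import numpy as np


def poly_features(t, degree):
    """Columns t**0 ... t**degree: a flexible basis with no physics built in."""
    return np.vander(t, degree + 1, increasing=True)


def poly_derivative_features(t, degree):
    """Time derivative of each basis column: j * t**(j-1), zero for the constant."""
    j = np.arange(degree + 1)
    return j * np.power.outer(t, np.maximum(j - 1, 0))


def ode_residual_matrix(t_col, k, degree):
    """Maps coefficients c to the residual dy/dt + k*y at the collocation points."""
    return poly_derivative_features(t_col, degree) + k * poly_features(t_col, degree)


def physics_informed_fit(t_obs, y_obs, t_col, k, degree=6, lam=1.0):
    """Minimize ||A c - y||^2 + lam * ||R c||^2 by stacking both terms into one least-squares problem."""
    A = poly_features(t_obs, degree)
    R = ode_residual_matrix(t_col, k, degree)
    lhs = np.vstack([A, np.sqrt(lam) * R])
    rhs = np.concatenate([y_obs, np.zeros(len(t_col))])
    coef, *_ = np.linalg.lstsq(lhs, rhs, rcond=None)
    return coef


def physics_residual(coef, t_col, k):
    """Sum of squared ODE residuals of a fitted polynomial at the collocation points."""
    R = ode_residual_matrix(t_col, k, len(coef) - 1)
    return float(np.sum((R @ coef) ** 2))
```

For example, fitting four noisy samples of `exp(-k t)` taken on [0, 1] with a degree-6 polynomial leaves the data-only problem (`lam=0.0`) underdetermined: seven coefficients, four points. Raising `lam` trades some data misfit for agreement with the assumed decay law, which is what constrains the curve on the part of `t_col` that has no observations.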

The talk explored various forms of inductive bias tailored to PHM systems, with a particular focus on heterogeneous temporal graph neural networks as well as physics-informed and algorithm-informed graph neural networks, and their application to virtual sensing, modelling of multi-body dynamical systems, and anomaly detection.